What AI Hypists Miss

These systems still struggle to implement real-world solutions.

Recently I heard a presentation by an engineer from OpenAI about the incredible transformations that will occur once we get to artificial general intelligence (AGI), or even superintelligence. He said that this will quickly solve many of the world’s problems: GDP growth rates could rise to 10, 15, even 20 percent per year, diseases will be cured, education revolutionized, and cities in the developing world will be transformed with clean drinking water for everyone.

I happen to know something about that last issue. I’ve been teaching cases over the past decade on why South Asian cities like Hyderabad and Dhaka have struggled to provide municipal water. The reason isn’t that we don’t know what an efficient water system looks like, or that we lack the technology to build it. Nor is it a simple lack of resources: multilateral development institutions have been willing to fund water projects for years.

The obstacles are different, and are entirely political, social, and cultural. Residents of these cities have the capacity to pay more for their water, but they don’t trust their governments not to waste resources through corruption or incompetent management. Businesses don’t want the disruption of pervasive infrastructure construction, and many cities host “water mafias” that buy cheap water and resell it at extortionate prices to poor people. These mafias are armed and ready to use violence against anyone challenging their monopolies. The state is too weak to control them, or to enforce the very good laws it already has on its books.

It is hard to see how even the most superintelligent AI is going to help solve these problems. And this points to a central conceit that plagues the whole AI field: a gross overestimation of the value of intelligence by itself to solve problems.

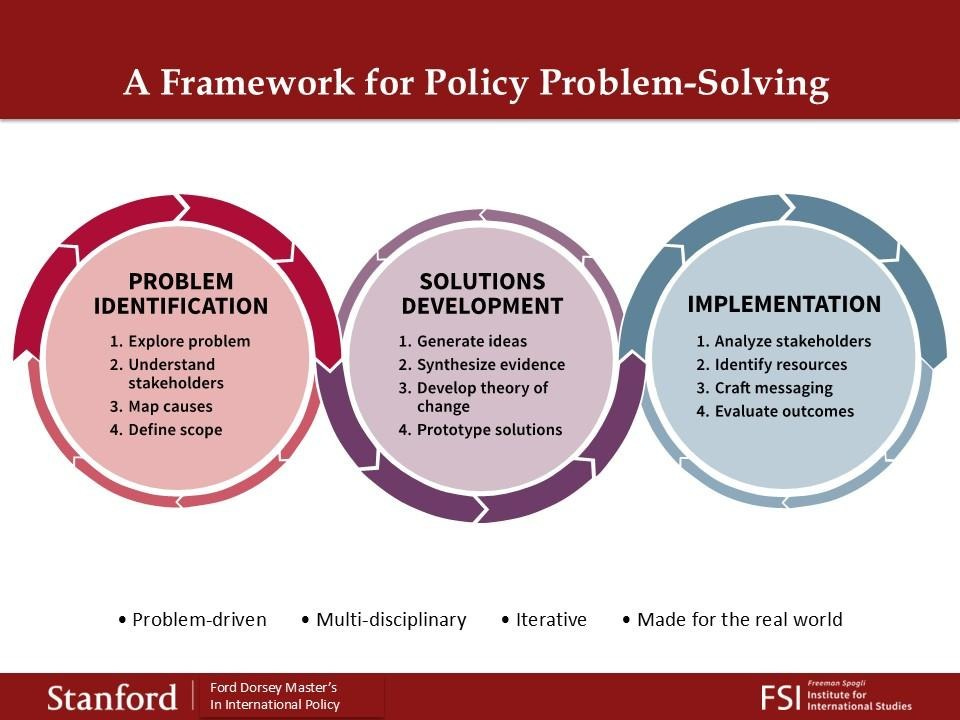

In the teaching I’ve done over the past two decades, and in the Master’s in International Policy program I direct at Stanford, I’ve helped develop a public problem-solving framework that we now teach to all our students. (Credit here also goes to my former colleague Jeremy Weinstein, who is now Dean of Harvard’s Kennedy School of Government.) The framework is simple, and consists of three circles:

There is a problem that extends way beyond AI, and applies to the way we think about public problem-solving in general. The bulk of effort, and what most academic public policy programs seek to teach, centers on the first two of the three circles: Problem Identification and Solutions Development. Indeed, many programs focus on Solutions Development exclusively: they teach aspiring policy-makers how to gather data and use a battery of powerful econometric tools to analyze it. This yields a set of optimal solutions that a policy analyst can hand to his or her principal as a way forward.

What is missing from this approach is what lies in the third circle: implementation. Our budding policy analyst typically finds that after handing a brilliant options memo to the boss, nothing happens. Nothing happens because there are too many obstacles—political, social, cultural—to carry out that preferred policy, as in the municipal water example.

So let’s go back to how AI will play in this space. AGI will definitely help in the first circle: identifying stakeholders, mapping a causal space, and defining the problem. It will be of most help in the second circle: gathering data and analyzing it to come up with optimal solutions. But intelligence only gets you to the end of the second circle, and is of limited help in the third. An LLM cannot directly interact with stakeholders, message them, or come up with resources. In particular, an LLM will not be able to engage in the kind of iterative back-and-forth between policymakers and citizens that is required for effective policy implementation. It will likely face big challenges in generating the kind of trust that is necessary for policies to be accepted and adopted.

It is not just political and social obstacles that AI has difficulty dealing with; LLMs have limited ability to directly manipulate physical objects. AI interacts with the physical world primarily through robotics, but robotics has lagged considerably behind the development of LLMs. Robots have proliferated enormously over the past decades and are omnipresent in manufacturing, agriculture, and many other domains. But the vast majority of today’s robots are programmed by human beings to do a limited range of very specific tasks. The world was wowed recently by Chinese humanoid robots doing kung fu moves, but I suspect the robots didn’t teach themselves how to act this way.

Robotically enabled LLMs do not have the ability to solve even simple physical problems that are novel or outside of their training set. My colleague Alex Stamos, a noted expert in cyber security, puts it this way: “my dog knows more physics than an LLM.” An LLM would be able to state Newton’s laws of motion, but it would not be able to direct a robot to chase a frisbee the way Alex’s dog can because that particular set of moves is not in its training set. A robot could be programmed to do this, but that would be the product of human intelligence and not AI.

Here’s an example of AI’s current limitations. I recently had an HVAC contractor replace the furnace in my house. The contractor photographed and measured the house’s layout; he had to route the new ducts and wiring in ways specific to my house’s design. It turned out that the new furnace would not fit through the existing attic door; he had to cut a larger opening with a reciprocating saw, and then repair the doorframe after the new unit was inside. There is no robot in the world that could do what my contractor did, and it is very hard to imagine a robot acquiring such abilities anytime in the near future, with or without AGI. Robots may get there eventually, but that level of human capacity remains a distant objective.

Many of the enthusiasts hyping AI’s capabilities think of policy problems as if they were long-standing problems in mathematics that human beings had great difficulties solving, such as the four-color map theorem or the Cap Set problem. But math problems are entirely cognitive in nature and it is not surprising that AI could make advances in that realm. The people building AI systems are themselves very smart mathematically, and tend to overvalue the importance of this kind of pure intelligence.

Policy problems are different. They require connection to the real world, whether that’s physical objects or entrenched stakeholders who don’t necessarily want changes to occur. As the economic historian Joel Mokyr has shown, earlier technological revolutions took years or even decades to materialize after the initial scientific and engineering breakthroughs were made, because those abstract ideas had to be implemented on a widespread basis in real-world conditions. AI may move faster on a cognitive level, but it may not be able to solve implementation problems more quickly than in previous historical periods.

This is not at all to say that AI will not be hugely transformative. But the kind of explosive, self-reinforcing AI advances that some observers predict are on the way will still have to solve implementation problems for which machines are not well suited. A ten percent annual growth rate will double GDP in seven years. Yet planet Earth will not remotely yield the materials—water, land, minerals, energy, or people—to make this come about, no matter how smart our machines get.
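
The doubling figure above is simple compound-growth arithmetic. As a rough sketch, assuming a constant growth rate compounded once a year (a simplification, and the helper name `doubling_time` is invented here for illustration), the time to double is ln(2) / ln(1 + rate):

```python
import math


def doubling_time(rate: float) -> float:
    """Years for a quantity to double at a constant annual growth rate.

    Assumes the rate is compounded once per year and never changes,
    which is a simplification of how real economies grow.
    """
    return math.log(2) / math.log(1 + rate)


# The growth rates mentioned in the presentation: 10, 15, and 20 percent.
for rate in (0.10, 0.15, 0.20):
    years = doubling_time(rate)
    print(f"{rate:.0%} annual growth: GDP doubles in about {years:.1f} years")
```

At 10 percent this comes out to a little over seven years, in line with the figure cited above; at 20 percent the economy would double in under four.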

Francis Fukuyama is the Olivier Nomellini Senior Fellow at Stanford University. His latest book is Liberalism and Its Discontents. He is also the author of the “Frankly Fukuyama” column, carried forward from American Purpose, at Persuasion.

I agree. The main point is what matters: Intelligence doesn't solve every problem. AI is great for problems that ONLY require intelligence. But how many problems is that really?

Really appreciate this essay. It gets at one of the big reasons why AI will be adopted more slowly than many think. I'm in the corporate world, where we're going insane trying to adopt it into fucking everything. Part of the problem is that all the processes, the people, the relationships, the ways of getting things done and understanding the businesses and the domains all grew up without AI, in ways that are not easily or immediately adaptable to AI.

That will change. As AI gains increasing facility among a larger number of people and organizations, those organizations will slowly change their processes and data to suit AI better. But that will take time. We're not getting 10% GDP growth in 3 years.

Thanks for this rational, realistic viewpoint.